SonarQube vs OWASP Top Ten

In an attempt to get more familiar with SAST (Static Application Security Testing), I installed SonarQube Community Edition. I wanted to see how good or bad it was at detecting the OWASP Top Ten. I fed it the OWASP NodeGoat Project to check its performance.

OWASP itself, in its own article on Static Code Analysis, acknowledges the limitations of this class of tool:

Many types of security vulnerabilities are very difficult to find automatically, such as authentication problems, access control issues, insecure use of cryptography, etc. The current state of the art only allows such tools to automatically find a relatively small percentage of application security flaws. Tools of this type are getting better, however.

False Positives

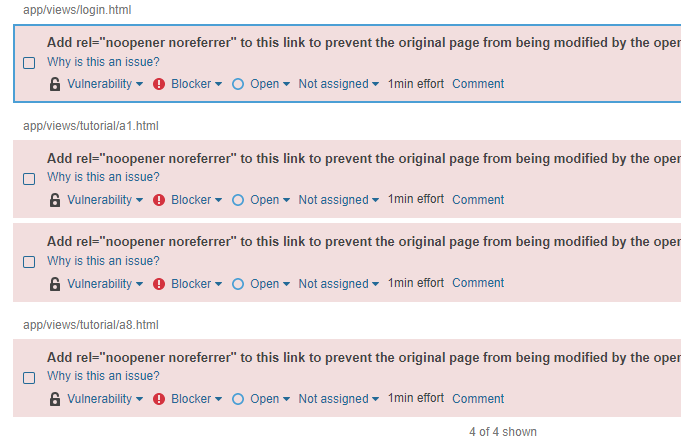

-

They flagged links with "target=_blank" as vectors for phishing attacks. Not what we're looking for at all.

-

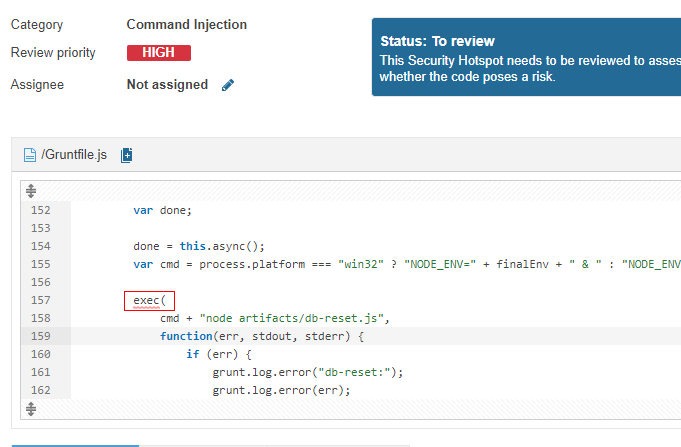

It found an

execcall in the Gruntfile. Nothing doing here.

-

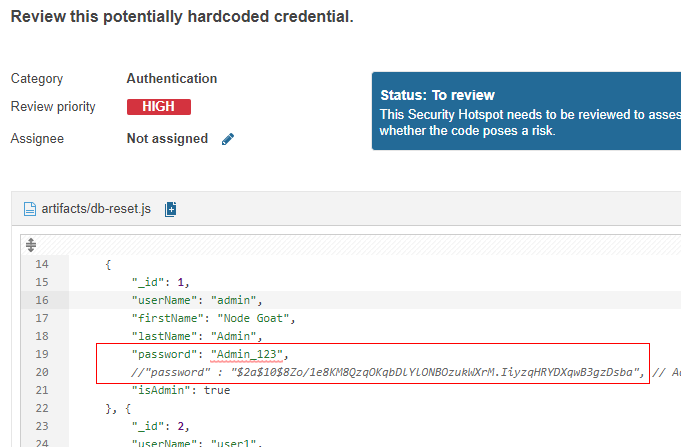

Flagged hard-coded passwords. These however were the

db-reset.jswhich merely runs when setting up the project's nosql database.

-

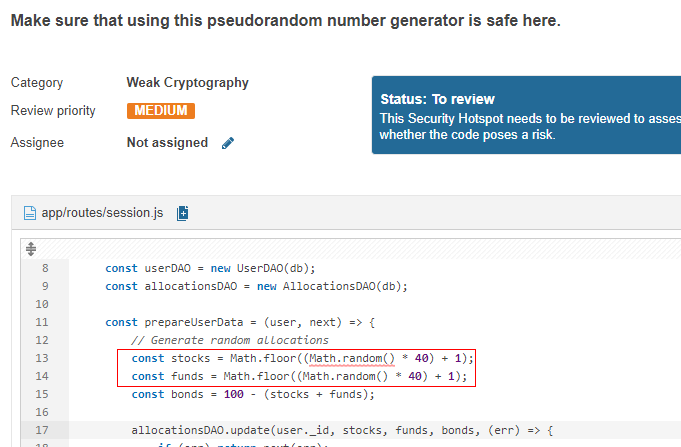

Another false positive was Math.random's psuedo-random generation, except for the fact that none of the psuedorandom data is used for encryption.
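To make the `Math.random` distinction concrete, here is a minimal sketch of when the warning matters and when it doesn't. The function names are hypothetical and are not taken from NodeGoat; the only real API used is Node's built-in `crypto.randomBytes`.

```javascript
// Sketch only: pickDemoIndex and makeResetToken are made-up names for illustration.
const { randomBytes } = require('crypto');

// Fine for non-security uses, e.g. picking a sample row or jittering a delay.
function pickDemoIndex(length) {
  return Math.floor(Math.random() * length);
}

// For values an attacker must not be able to predict (session IDs, reset tokens),
// use a cryptographically secure source instead of Math.random.
function makeResetToken(byteLength = 32) {
  return randomBytes(byteLength).toString('hex');
}

console.log(pickDemoIndex(5));
console.log(makeResetToken());
```

A SAST tool can't easily tell which of these two situations it is looking at, which is probably why it flags every `Math.random` call and leaves the judgment to a human.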

Relevant Findings

-

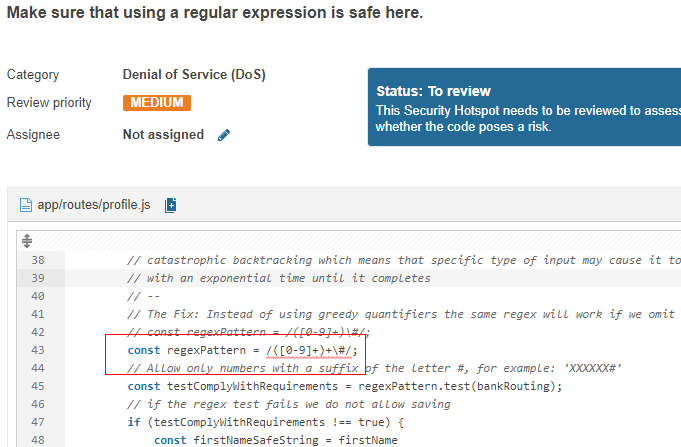

ReDOS stuff

-

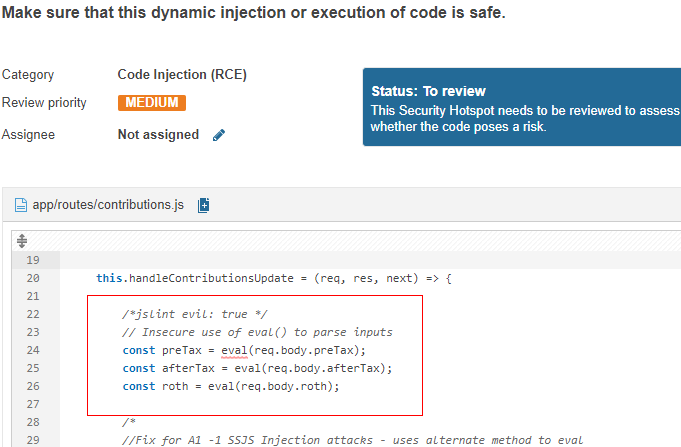

RCE

eval(Serverside Injection)
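For context on why the `eval` finding is a real one, here is a rough sketch of the pattern. The function names and the idea of parsing an "amount" field are assumptions made for illustration, not a copy of NodeGoat's code.

```javascript
// Hypothetical helpers; rawInput stands in for a value taken from a request body.

// Vulnerable: eval runs whatever JavaScript the client sends, on the server.
function parseAmountUnsafe(rawInput) {
  return eval(rawInput);
}

// Safer: accept only a string of digits and convert it explicitly.
function parseAmountSafe(rawInput) {
  if (typeof rawInput !== 'string' || !/^\d+$/.test(rawInput)) {
    throw new TypeError('amount must be a non-negative integer');
  }
  return Number.parseInt(rawInput, 10);
}

// The unsafe version evaluates the expression instead of treating it as data.
console.log(parseAmountUnsafe('1 + 2'));
console.log(parseAmountSafe('42'));
```

A call to `eval` on request data is easy to spot syntactically, which may be why this was the one injection SonarQube caught.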

False Negatives

So NodeGoat's characterization of the OWASP Top Ten goes as follows:

- Injection [Partially Detected by SonarQube]
- Broken Auth [Undetected]
- XSS [Undetected]
- Insecure Direct Object References [Undetected]
- Misconfig [Undetected]
- Sensitive Data [Undetected]
- Access Control [Undetected]
- CSRF [Undetected]
- Insecure Components [Undetected]
- Redirects [Undetected]

So the only relevant Top Ten risks discovered by SonarQube were the `eval` statements, which qualify as A1 Injection, and it totally whiffed on the NoSQL injection in other parts of the project. The ReDoS, although relevant and placed purposefully in NodeGoat, is outside the Top Ten, but good on SonarQube for finding it.
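For anyone unfamiliar with ReDoS, here is a minimal sketch of the general idea. Both patterns are textbook examples chosen for illustration; NodeGoat's actual regex is not reproduced here, and `timeMatch` is a made-up helper.

```javascript
// Hypothetical patterns for illustration only.

// Nested quantifiers: on a non-matching input, a backtracking engine may try
// an exponential number of ways to split the run of "a" characters.
const vulnerable = /^(a+)+$/;

// Same set of accepted strings with a single quantifier: no nested backtracking.
const linear = /^a+$/;

function timeMatch(pattern, input) {
  const start = process.hrtime.bigint();
  const matched = pattern.test(input);
  const elapsedMs = Number(process.hrtime.bigint() - start) / 1e6;
  return { matched, elapsedMs };
}

// Keep the run short: each extra "a" roughly doubles the vulnerable pattern's work.
const input = 'a'.repeat(20) + '!';
console.log('vulnerable:', timeMatch(vulnerable, input));
console.log('linear:    ', timeMatch(linear, input));
```

Both patterns reject the input, but the time the first one takes grows very quickly with input length, which is what lets an attacker tie up the server with a short request.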

Summary

So not a lot was found. I don't have enough experience with SAST to say whether this is due to a lack of configuration on my part in SonarQube itself, the source project being in JavaScript, or something else. OWASP's own article on Static Code Analysis does say this class of tool is good at detecting SQL injection. I suspect the NoSQL injection vulnerabilities in NodeGoat evaded this analysis simply because the project uses MongoDB.
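As a guess at why a NoSQL injection is harder to spot than classic SQL injection, here is a sketch of what one can look like. There is no string concatenation to flag; the attack arrives as a JSON object. The function names and the login scenario are hypothetical, and no database driver is involved, so the snippet runs on its own.

```javascript
// Hypothetical query builders; nothing here is copied from NodeGoat.

// Vulnerable: values from a parsed JSON body go straight into the query filter.
function buildLoginQueryUnsafe(body) {
  return { userName: body.userName, password: body.password };
}

// Safer: accept only plain strings, so operator objects such as {"$gt": ""} are rejected.
function buildLoginQuerySafe(body) {
  for (const field of ['userName', 'password']) {
    if (typeof body[field] !== 'string') {
      throw new TypeError(`${field} must be a string`);
    }
  }
  return { userName: body.userName, password: body.password };
}

// Attacker-controlled body: $gt is a MongoDB comparison operator, and
// { password: { $gt: "" } } matches any non-empty string password.
const malicious = JSON.parse('{"userName": "admin", "password": {"$gt": ""}}');
console.log(buildLoginQueryUnsafe(malicious));
```

The unsafe builder looks like perfectly ordinary object construction, so a rule written around SQL strings has nothing obvious to match against.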